A Robust Vision-based Algorithm for Classifying and Tracking Small Orbital Debris Using On-board Optical Cameras

This study develops a vision-based classification and tracking algorithm to address the challenges of in-situ tracking of the small orbital debris environment, including debris observability and the instrument requirements for observing small debris. The algorithm operates in near real-time and is robust to conditions that make moving-object tracking difficult: multiple moving objects, objects with varied trajectories and speeds, very small or faint objects, and substantial background motion. Its performance is optimized and validated on space image data from simulated environments generated with NASA Marshall Space Flight Center’s Dynamic Star Field Simulator of on-board optical sensors and cameras.

code data

Sparse Variable Generalized Linear Model (SVGLM)

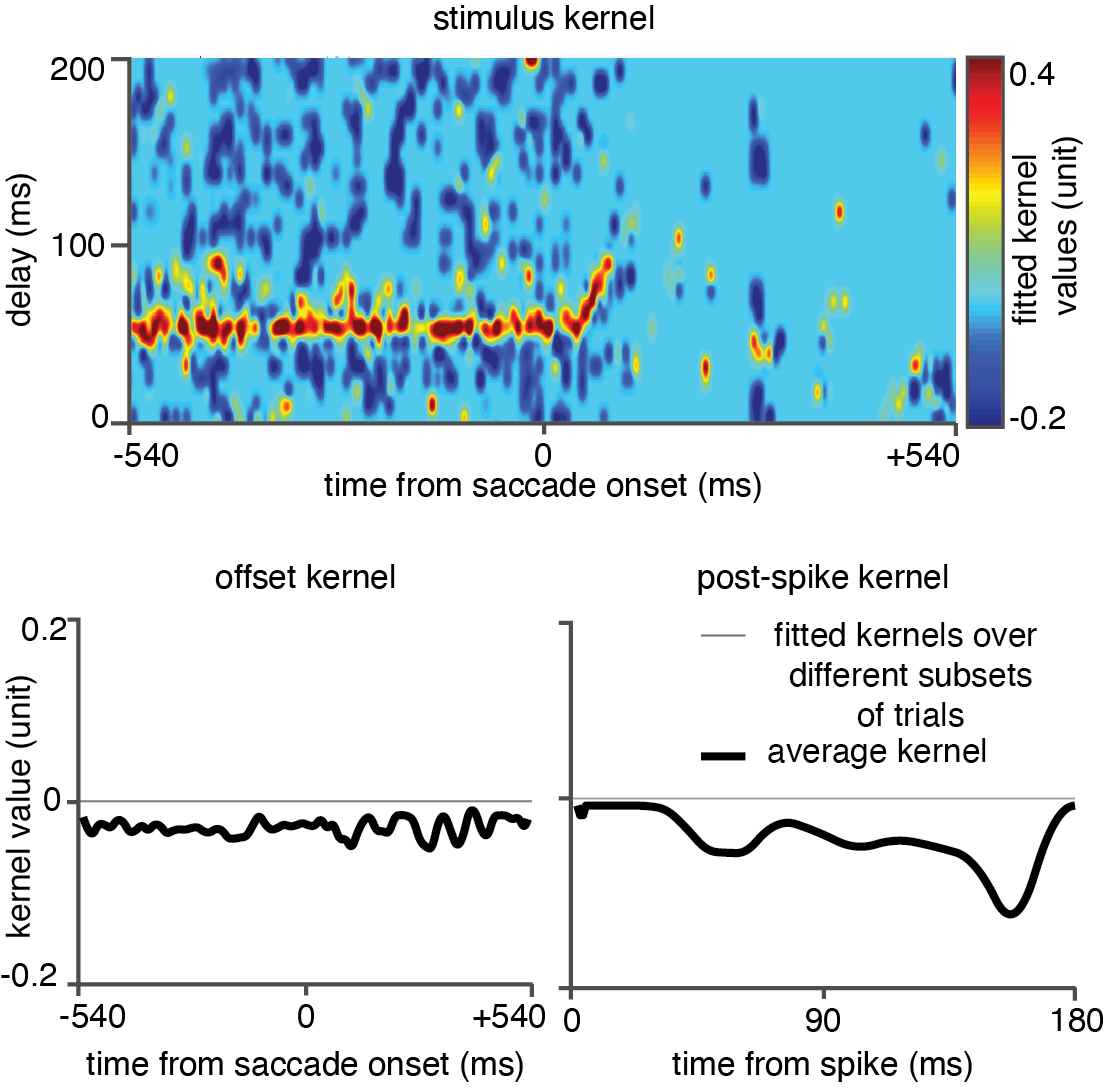

The sparse variable generalized linear model framework, termed the SVGLM, tracks the rapid, saccade-induced changes in the spatiotemporal sensitivity of neurons on a millisecond timescale. The principal idea of the SVGLM is that the stimulus-response relationship of a neuron is characterized by a set of time-varying stimulus kernels, which represent the neuron’s spatiotemporal receptive field as it varies over time.
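The idea of a time-varying stimulus kernel can be illustrated with a minimal sketch. This is not the SVGLM implementation: the function name `time_varying_glm_rate`, the array layout, the logistic nonlinearity, and the toy values are all assumptions chosen for illustration. The only point it shows is that the weight given to a stimulus depends on when the response is measured, not just on the stimulus location and delay.

```python
import numpy as np

def time_varying_glm_rate(stim, kernels, bias=0.0):
    """Spike probability per time bin for a GLM whose kernel changes over time.

    stim:    (T, X) array, stimulus intensity at each time bin and location.
    kernels: (T, X, D) array; kernels[t, x, d] weights the stimulus shown at
             location x, d bins before response time t (hypothetical layout).
    bias:    constant offset added to the linear drive (assumed).
    """
    T, X = stim.shape
    D = kernels.shape[2]
    drive = np.full(T, bias, dtype=float)
    for t in range(T):
        # Only delays that reach back to the start of the recording are used.
        for d in range(min(D, t + 1)):
            drive[t] += kernels[t, :, d] @ stim[t - d]
    # Logistic nonlinearity (an assumption) maps the drive to a probability.
    return 1.0 / (1.0 + np.exp(-drive))

# Toy example: one stimulus flash, and a kernel that is non-zero only at
# response time 5, location 2, delay 3.
T, X, D = 10, 4, 5
stim = np.zeros((T, X))
stim[2, 2] = 1.0
kernels = np.zeros((T, X, D))
kernels[5, 2, 3] = 2.0
rate = time_varying_glm_rate(stim, kernels)
```

In this toy case the flash at time bin 2 raises the predicted response only at time bin 5, because that is the only response time whose kernel assigns weight to a stimulus three bins in the past. A conventional GLM with a single fixed kernel would apply the same weights at every response time.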

Analyzing the Computational Complexity of a Dynamic Model of the Time-varying Spatiotemporal Sensitivity of Neurons in the Visual Cortex

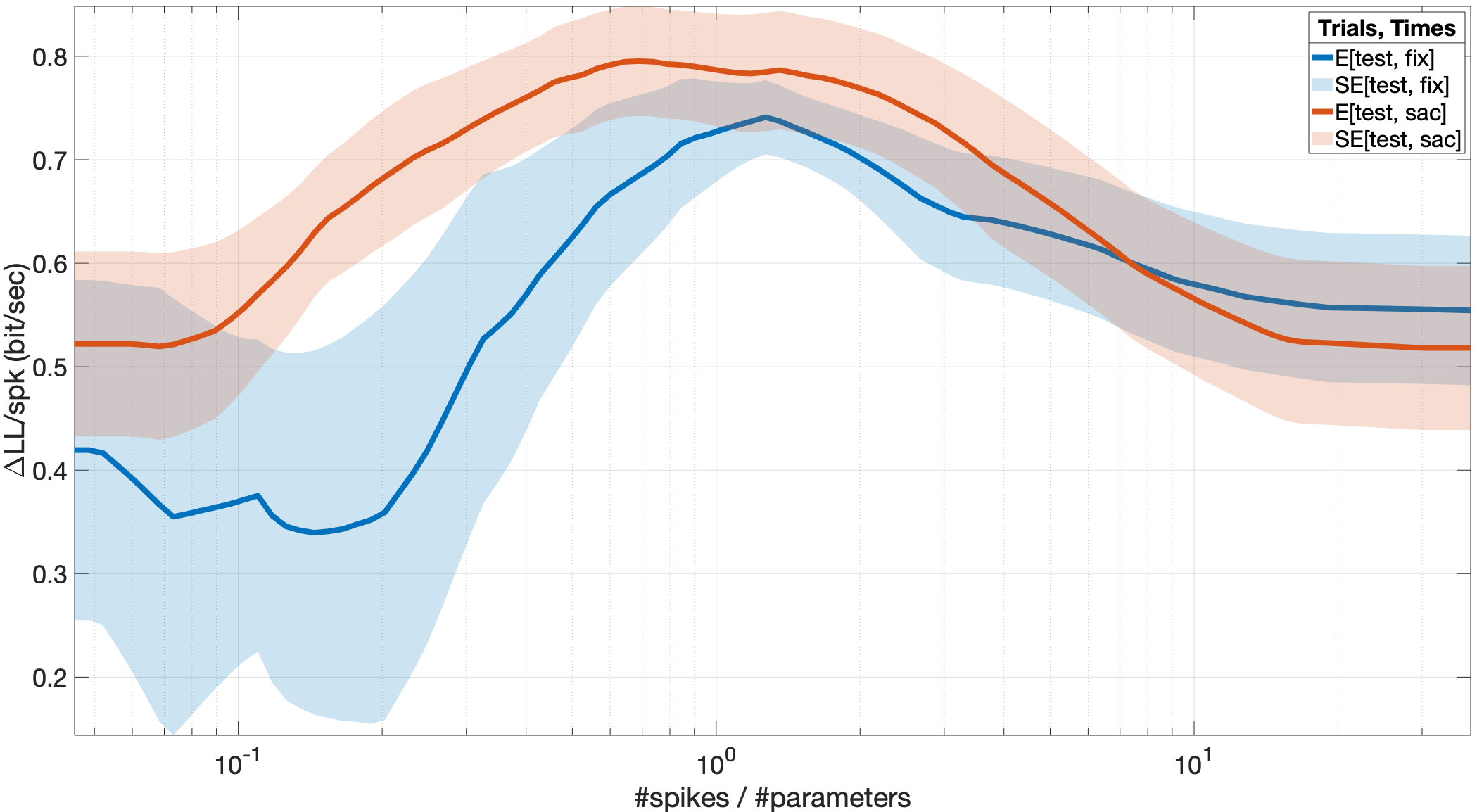

To precisely track the dynamics of visual information encoding during saccadic eye movements, we have recently developed a computational model applicable to the sparse, limited neural data available during the short timescale of saccades. The time-varying stimulus kernels estimated by the model provide a precise description of the neurons’ dynamic spatiotemporal sensitivity during a saccade. Achieving this precision, however, requires handling a trade-off between the computational complexity, generalizability, and prediction accuracy of the encoding model. In this paper, we derive a principled strategy for the model parameterization to handle this trade-off, which is particularly critical when estimating high-dimensional models from sparse neuronal data. The results of this analysis can guide the optimization of dimensionality reduction and model parameterization approaches used to estimate generalized linear model-based frameworks applied to spiking data, beyond the data and approaches used in this study.
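One common way to reduce the dimensionality of a kernel, shown here only as a generic sketch and not as the parameterization strategy derived in the paper, is to express the kernel as a weighted sum of a few smooth basis functions. The helper `gaussian_basis`, the Gaussian shape, and the values of `n_bins`, `n_basis`, and `width` are assumptions for illustration.

```python
import numpy as np

def gaussian_basis(n_bins, n_basis, width):
    """Return an (n_bins, n_basis) matrix of evenly spaced Gaussian bumps."""
    centers = np.linspace(0, n_bins - 1, n_basis)
    t = np.arange(n_bins)[:, None]
    return np.exp(-0.5 * ((t - centers[None, :]) / width) ** 2)

n_bins, n_basis = 200, 20          # 200 time bins described by 20 weights
basis = gaussian_basis(n_bins, n_basis, width=10.0)

# Only the basis weights are free parameters; the kernel follows from them.
weights = np.zeros(n_basis)
weights[5] = 1.0
kernel = basis @ weights           # shape (n_bins,)
```

Here the number of free parameters along the time dimension drops from 200 to 20. Fewer and wider basis functions lower the estimation cost and the risk of overfitting sparse data, but they also limit how rapid a change the kernel can express, which is the kind of trade-off the paragraph above describes.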

code data paper

Saccade Target Remapping

Trace extrastriate response changes and decompose them into their constituent components, using a combined physiological and computational approach.

Using temporally and spatially precise measurement of V4 and MT RFs around the time of saccades, we have discovered a novel extrastriate mechanism for the maintenance of visual information across saccades. A subpopulation of extrastriate neurons shows persistent activity, maintaining the information from their original RF across the saccade. We also observe saccade target (ST) and future field (FF) remapping, in which neurons preemptively shift their sensitivity towards the saccade target or their future RF. In order to examine the sufficiency of these neural changes for visual stability, we develop a novel extension of the generalized linear model framework to capture perisaccadic changes in neurons’ visual responses and decompose perisaccadic responses at each time point into multiple independent sources.

code

Mislocalization

Examine the Link Between Persistent Activity in Extrastriate Areas and the Biases in Spatial Perception During Saccades

Our modeling approach provides a powerful means of predicting perisaccadic perceptual phenomena and then testing the contribution of each neurophysiological component to generating them, thus linking perisaccadic neuronal changes to perceptual changes during eye movements. In psychophysical experiments, humans mislocalize stimuli appearing just prior to or during saccades. Here, we show the same psychophysical perisaccadic mislocalization phenomena in monkeys. This allows us to directly tie reported localizations to the responses of visual neurons during the behavioral task, and so evaluate the contribution of the various perisaccadic changes (FF and ST remapping, and persistent activity) to the perceived stimulus location on a trial-by-trial basis. We can further compare behavioral reports to the model-generated readout based on neuronal responses, allowing us to tie perisaccadic neuronal changes to their perceptual counterparts.

code